Mostbet Bookmaker and Online Casino in Nepal

Mostbet, a well-known bookmaker and online casino, has gained popularity among bettors in Nepal. The platform combines gambling with the convenience of modern technology and offers a wide range of casino games and sports betting. Users value Mostbet for its reliability, attractive odds, and the chance of large wins. It gives gambling enthusiasts in Nepal a secure and engaging place to play.

About Mostbet

Mostbet, a popular bookmaker and online casino, has established itself in the Nepali market as a dependable and attractive gaming portal. It offers a wide range of sports betting and casino games, combining a user-friendly interface with guarantees of security and fairness. Mostbet attracts players from Nepal with generous bonuses, high odds, and quality customer service.

Mostbet License

The Curaçao license held by Mostbet is an important confirmation of its legitimacy and reliability. It is issued by the Curaçao authorities, which require compliance with strict standards and rules in the gambling sector. The license ensures player safety and the integrity of the games, and it also protects players' interests. Mostbet aims to give its customers a good experience in gambling entertainment while complying with all applicable standards and legal requirements.

Kick Off the 2024 IPL Season: Join Mostbet, India's Premier Betting Portal

The 2024 Indian Premier League is a major occasion for betting enthusiasts in India, and bettors from Nepal are welcome to take part even though the matches are held in India. This IPL season offers punters a large number of betting opportunities. Mostbet, a leading name in bookmaking, is widening its reach to serve both Indian and Nepali punters by offering a refined bonus system together with a user-friendly mobile interface. To start betting on cricket, open the Mostbet website, complete the registration process, and follow the tournament.

Betting on IPL 2024 at Mostbet

| Bookmaker | MOSTBET |
| --- | --- |
| ✅ Official website | Mostbet.com |
| 📃 License | Mostbet operates under Curaçao license No. 8048/JAZ2016-065 |
| ⚽ Sports betting | Football (soccer), hockey, cricket, basketball, tennis, MMA, eSports, American football, baseball, racing, and many others |
| ▶ Bet types | Single bet, multi bet, accumulator, express, system, live bet |
| 🔢 Odds formats | Decimal, English (fractional), American, Hong Kong, Indonesian, Malay |
| 🎰 Online casino | Yes |
| 🎮 Casino games | Aviator, slots, BuyBonus, Megaways, Drops & Wins, Quick Games, Card and Table Games |
| 🃏 Live casino | Yes |
| 🎲 Live casino games | Live roulette, live poker, live blackjack, live baccarat, Teen Patti, Andar Bahar |
| 🎁 Bonuses | Deposit bonus, free spins, free bets, bet insurance, crypto bonus, express bonus, weekly Friday bonus, 10% cashback |
| 👉 Sign up | mostbet.com/register/ |
| 🔹 Log in | mostbet.com/?login=1 |
| 💳 Deposit methods | Bank cards, bank transfers, cryptocurrency, e-wallets, payment services |
| 💰 Withdrawal methods | Bank cards, bank transfers, cryptocurrency, e-wallets, payment services |
| ⌛ Deposit and withdrawal speed | A few minutes |
| 📱 Mobile app | iOS, Android |
| 📞 Player support | [email protected], Telegram, WhatsApp |
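
The odds formats listed in the table all express the same price in different notation. As a rough illustration, the sketch below converts decimal odds into the other five formats using the commonly cited conventions. The function names are hypothetical and are not part of any Mostbet API, and how a particular bookmaker rounds or displays these values is not covered here.

```python
from fractions import Fraction


def _check(decimal_odds: float) -> None:
    # Decimal odds include the returned stake, so a valid price is above 1.
    if decimal_odds <= 1:
        raise ValueError("decimal odds must be greater than 1")


def to_fractional(decimal_odds: str) -> Fraction:
    """English (fractional) odds: net profit per unit staked.

    Takes a string such as "2.5" so the fraction is exact.
    """
    _check(float(decimal_odds))
    return Fraction(decimal_odds) - 1


def to_hong_kong(decimal_odds: float) -> float:
    """Hong Kong odds: net profit per unit staked, as a decimal."""
    _check(decimal_odds)
    return decimal_odds - 1


def to_american(decimal_odds: float) -> float:
    """American odds: positive = profit on a 100 stake,
    negative = stake needed to win 100."""
    _check(decimal_odds)
    if decimal_odds >= 2:
        return 100 * (decimal_odds - 1)
    return -100 / (decimal_odds - 1)


def to_indonesian(decimal_odds: float) -> float:
    """Indonesian odds: American odds divided by 100."""
    return to_american(decimal_odds) / 100


def to_malay(decimal_odds: float) -> float:
    """Malay odds: equal to Hong Kong odds up to even money,
    otherwise the negative reciprocal of the Hong Kong odds."""
    hk = to_hong_kong(decimal_odds)
    return hk if hk <= 1 else -1 / hk


if __name__ == "__main__":
    for price in ("1.5", "2.5"):
        d = float(price)
        print(price, to_fractional(price), to_hong_kong(d),
              to_american(d), to_indonesian(d), round(to_malay(d), 3))
```

Decimal odds are used as the common starting point because every other format can be derived from them with a single expression.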

Registration at Mostbet

Mostbet offers a simple and convenient registration process that opens access to betting and casino games. Here are step-by-step instructions for registering on the platform:

- Go to the Mostbet website: open the official Mostbet website in your browser.

- Click the registration button: find and click the "Register" button, which is usually located at the top of the main page.

- Fill in the registration form: enter your personal information, such as first name, last name, email address, and phone number.

- Select an account currency: choose the preferred currency for your gaming account.

- Create a username and password: create a unique username and a strong password to secure your account.

- Confirm your registration: complete the verification process, which may include email or SMS verification.

- Make your first deposit (optional): fund your account to start betting or playing in the casino. You can also take advantage of the welcome bonus.

Sports Betting at Mostbet

Sports betting at Mostbet covers many disciplines and gives users a wide variety of choices. Here are some of the popular sports to bet on at this platform:

- Football: the most popular sport for betting, with many leagues and international competitions on offer. Football bets cover match results, number of goals, individual player achievements, and more.

- Basketball: basketball attracts bets on match results, player points, and point spreads. The NBA, Euroleague, and national championships are the main events for betting.

- Tennis: popular because of its year-round calendar and the variety of tournaments, from Grand Slams to local championships. Bets cover the match winner, the number of sets, and games.

- Hockey: hockey betting is especially popular on major tournaments such as the NHL and the World Championship. Bets are placed on the match result, total goals, and goal difference.

- Electronic sports (eSports): a rapidly growing segment that includes games such as Dota 2, CS:GO, and League of Legends. eSports betting covers match results, map outcomes, and individual player achievements.

Types of Sports Bets

In sports betting there are several types of bets, each with its own characteristics:

- Single bets: a bet on one specific outcome of an event. Simple and clear, suitable for beginners.

- Accumulator: a combination of several bets in which the odds are multiplied to increase the winnings. Every selection must be predicted correctly.

- System bets: similar to express (accumulator) bets, but they allow you to win even if one or more selections lose.

- Handicap betting: evens out the chances between teams by adding or subtracting a fixed number of points.

- Tote: bets are collected into a common pool, and the winnings are distributed among the players who predicted the results correctly.
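
The arithmetic behind the bet types above can be sketched as follows. This is a simplified illustration under stated assumptions, not Mostbet's settlement logic: odds are decimal, the function names are hypothetical, a system bet is treated as a set of equal-stake accumulators over every combination of a given size, and the tote pool is assumed to be split in proportion to winning stakes after an assumed operator commission.

```python
from itertools import combinations
from math import prod


def accumulator_payout(stake: float, odds: list[float]) -> float:
    """All selections must win; the decimal odds are multiplied together."""
    return stake * prod(odds)


def system_payout(stake_per_combo: float,
                  selections: list[tuple[float, bool]],
                  size: int) -> float:
    """Assumed model: one accumulator per combination of `size` selections.

    `selections` holds (decimal_odds, won) pairs. A combination pays only
    if every selection in it won, so some losses can be tolerated.
    """
    total = 0.0
    for combo in combinations(selections, size):
        if all(won for _, won in combo):
            total += stake_per_combo * prod(odds for odds, _ in combo)
    return total


def handicap_wins(team_score: float, opponent_score: float,
                  handicap: float) -> bool:
    """The handicap is added to the backed team's score before comparing."""
    return team_score + handicap > opponent_score


def tote_payouts(pool: float, winning_stakes: dict[str, float],
                 commission: float = 0.0) -> dict[str, float]:
    """Assumed model: the pool, minus commission, is shared among winners
    in proportion to their stakes."""
    total = sum(winning_stakes.values())
    if total == 0:
        return {}
    net_pool = pool * (1 - commission)
    return {name: net_pool * stake / total
            for name, stake in winning_stakes.items()}


if __name__ == "__main__":
    print(accumulator_payout(10, [2.0, 1.5, 3.0]))  # 90.0
    picks = [(2.0, True), (1.5, True), (3.0, False)]
    print(system_payout(10, picks, size=2))         # 30.0
    print(handicap_wins(1, 2, handicap=1.5))        # True
    print(tote_payouts(1000, {"a": 30, "b": 10}, commission=0.1))
```

With the same three selections, the accumulator would pay nothing because one pick lost, while the 2-of-3 system still returns the one combination in which both picks won.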

Mostbet Promotions and Bonuses

Mostbet offers a wide range of promotions and bonuses that make the gaming experience more attractive. These offers include a welcome bonus for new players, reload bonuses, cashback, and special promotions tied to major sporting events. Tournaments with valuable prizes are also held regularly, adding excitement and extra chances to win for active participants.

- Welcome bonus: new players receive a generous bonus after registering.

- Deposit bonuses: regular opportunities to boost your deposit with a bonus.

- Free bets: free wagers on sporting events.

- Cashback: a refund of part of lost funds for loyal customers.

- Competitions and giveaways: a chance to win additional prizes and cash.

- Special promotions: regular special offers for all players.

- VIP program: exclusive bonuses and rewards for the most active players.

- Birthday: special gifts to celebrate your birthday.

With Mostbet, everyone can find something that makes playing more enjoyable and rewarding.

Mostbet Online Casino

The Mostbet online casino attracts users with its variety and the quality of its gaming experience. Here are the main features of this casino:

- Wide variety of games: users can enjoy many kinds of slots, table games, and live dealer games.

- Security and reliability: Mostbet ensures a high level of data protection and a fair gaming experience.

- Generous bonuses and promotions: the casino regularly offers bonuses and promotions to increase your chances of winning.

- Mobile compatibility: you can play at Mostbet not only on your computer but also through the mobile application.

- Customer support: 24/7 customer support is always ready to help with any problem.

Casino Games

Casino games are an exciting form of entertainment that attracts many players. From poker and roulette to slot machines and blackjack, the casino offers a variety of entertainment options. Here is a list of the most popular games:

- Poker: a game for strategists and analysts in which you compete against other players in different variations of this classic game.

- Roulette: spinning the wheel and betting on numbers is an exciting game that has won over many players.

- Blackjack: a card game that rewards the ability to count cards and make strategic decisions.

- Slot machines: simple and fun, they offer large jackpots and plenty of entertainment.

- Baccarat: a card game with minimal strategy in which luck plays the main role.

- Keno: a lottery-style game in which you pick numbers and hope they match the draw.

- Scratch cards: scratch off the coating to see whether you have won.

- Wheel of Fortune: spin the wheel and hope for a prize.

- Video poker: combines elements of poker and slot machines.

- Sic Bo: a traditional Asian game in which dice are rolled and bets are placed on the outcome.

- Craps: a dice game that combines strategy with excitement.

- Pai Gow Poker: a variation of poker with Chinese roots.

- Dice: a simple and fun dice game.

- Lotteries: an easy way to try for big prizes.

- Bingo: a social game played with balls and cards.

Each of these games offers its own kind of fun and its own chances to win, so it is worth trying them all to find your favorite.

Live Casino Games

Live casino games are an exciting way to immerse yourself in the atmosphere of a casino without leaving home. With live dealers, real cards, and real-time roulette wheels, they provide an authentic gaming experience.

Slots

Slots at Mostbet offer players an engaging world of excitement and variety. There is a large selection of slot machines with different themes and bonuses. Mostbet slots give you the chance to win large jackpots and enjoy exciting games.

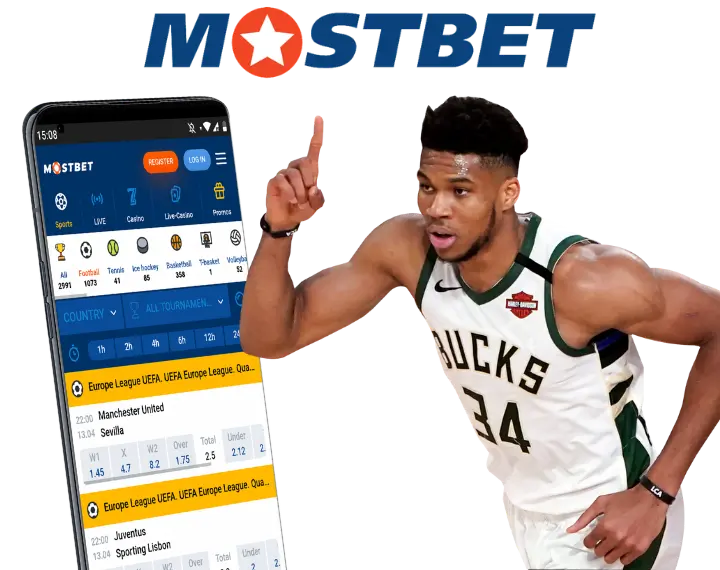

Mostbet Mobile Version

The mobile version of Mostbet is a convenient, modern solution for those who want to enjoy gaming and sports betting at any time and in any place.

- Convenient and accessible: the mobile version is available at any time and anywhere, so players can enjoy entertainment on the go.

- Streamlined interface: an intuitive, easy-to-use interface ensures a comfortable gaming experience.

- Quick access to bets: one tap and your bet is placed.

- Wide selection of games and sporting events: many games and sports betting markets in your pocket.

- Bonuses and promotions: mobile users often receive special offers and bonuses.

- Security and privacy: you can rely on dependable protection of your data and financial transactions.

- 24/7 support: a professional support team is ready to help at any time.

- Automatic updates: the app is kept up to date to provide the best gaming experience.

- Variety of payment methods: you can choose a convenient method for depositing and withdrawing funds.

- Compatible with different devices: suitable for smartphones and tablets running different operating systems.

Mostbet Deposit and Withdrawal Methods

Deposit and withdrawal methods on the Mostbet Nepal platform may vary depending on the available options and the bookmaker's current rules. Here are some possible methods:

- Bank cards: you can use credit or debit cards to deposit funds into and withdraw funds from your Mostbet account.

- E-wallets: popular e-wallets such as Skrill, Neteller, or PayPal may be available for deposits and withdrawals.

- Bank transfer: you can carry out transactions by bank transfer by linking your bank account to your Mostbet account.

- Mobile payments: depending on the region, mobile payment systems may be available for depositing funds from mobile devices.

- Cryptocurrencies: some bookmakers offer the option of using cryptocurrencies such as Bitcoin for financial transactions.

Please note that the rules and available methods may change, so it is recommended to check the latest information on the official Mostbet website or to contact support for details on deposit and withdrawal methods in Nepal.

Customer Service

Quality customer support is an important part of a successful gaming experience at any online casino. Here is what Mostbet customer support offers:

- 24/7 availability: customer support is available 24 hours a day, ready to answer your questions and help whenever you need it.

- Professionalism: experienced specialists are ready to resolve any technical or gaming issues.

- Multiple contact methods: you can contact support via chat, phone, or email.

- Quick response: issues are resolved promptly so that you can keep playing without delay.

- Help with games and bets: specialists will help you understand the rules of games and bets.

Mostbet customer support is always ready to provide the information and assistance you need for a good gaming experience.

FAQ

To register on the Mostbet Nepal website, go to the official website, click "Registration", and fill in the required fields.

Mostbet offers a wide range of sports bets, including football, cricket, basketball, and other popular sports.

Mostbet offers various types of bonuses, including a welcome bonus, deposit bonuses, and sports promotions.

Mostbet offers various payment methods, such as bank cards, e-wallets, and mobile payments.

You can reach Mostbet customer support via the live chat, email, or phone number provided on the official website.